RSAC 2026 recap: Moving passkeys from direction to execution

RSAC 2026 highlighted how real success with passkeys depends on strong implementation, UX, and evolving...

Passkeys for banking: A conversation with CyberRisk TV

A recap of CyberRisk TV at RSAC 2026 on passkeys for banking: Why adoption is accelerating, what CISOs...

The eSignature Newsletter for April 2026

OneSpan Sign integrates with Workato, new digital identity regulations, and eSignature integrations 101

OneSpan Sign integration for Workato: Low-code eSignature automation

Automate document signing workflows in Workato with the OneSpan Sign integration. No code, no manual...

Why European banks must act now on EUDI Wallets: Regulatory drivers, deadlines, and current ambiguities

Learn about the requirements, regulations, and major deadlines for banks to adopt EUDI Wallets for...

PSD3 updates: Deep dive on fraud prevention, bank liability, and the regulatory impact

Get the latest PSD3 and PSR updates to prepare for compliance. Learn about bank liability, news for PSPs, and...

The Authentication Newsletter for January 2026

Explore 2026 predictions, regulatory updates, fraud prevention tips, the future of authentication, and...

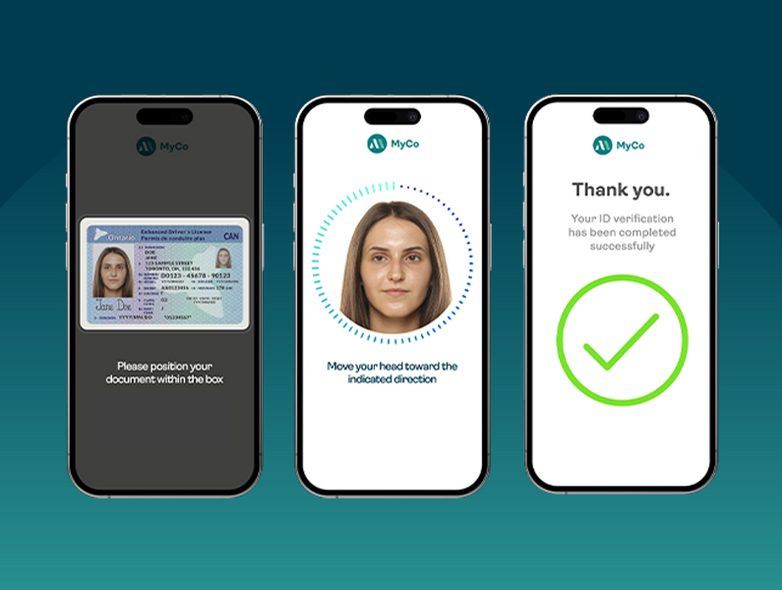

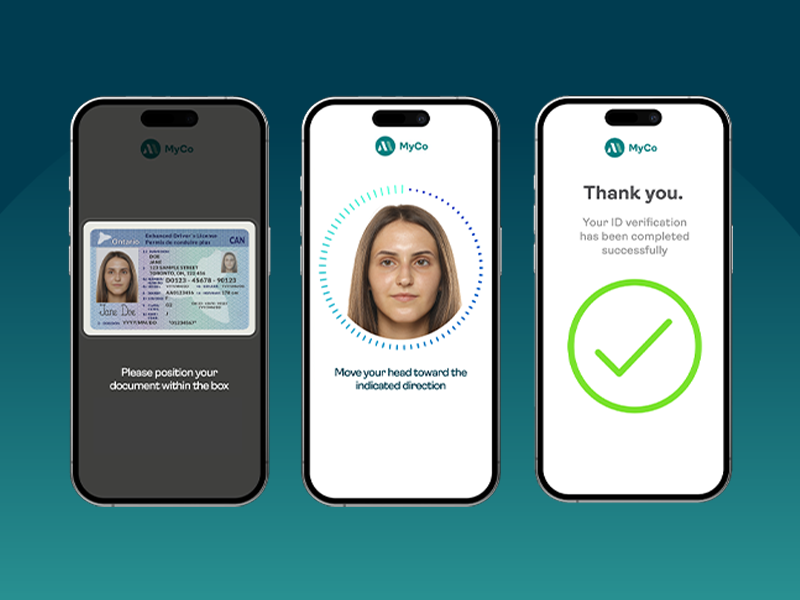

FINTRAC: Preparing your identity verification strategy for more than just compliance

FINTRAC-ready vs FINTRAC-optimized? Learn how the right approach to identity verification facilitates...